|

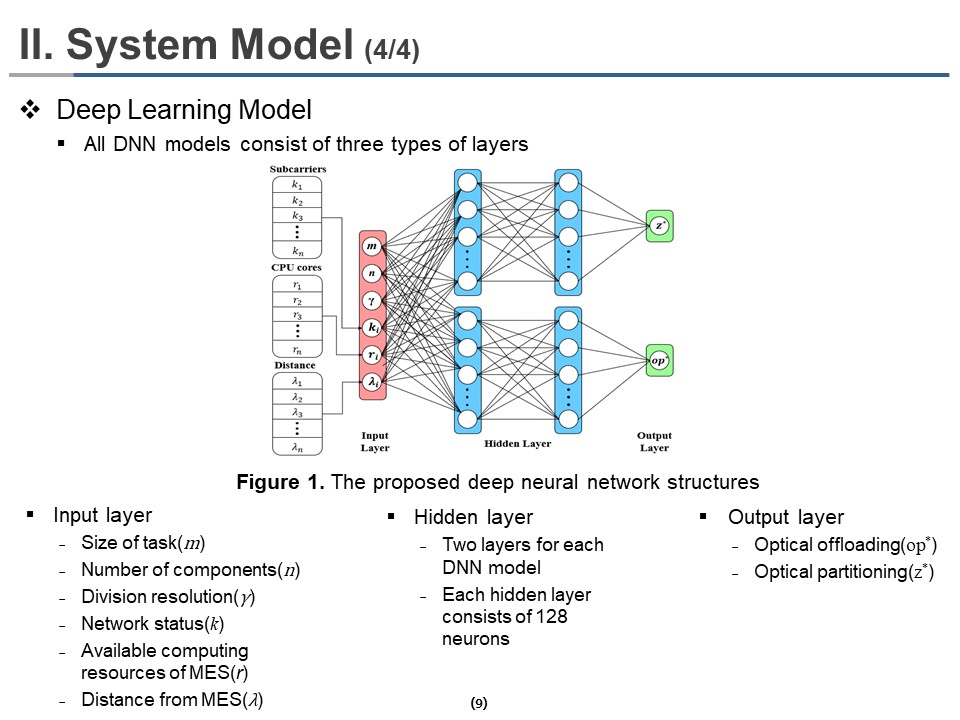

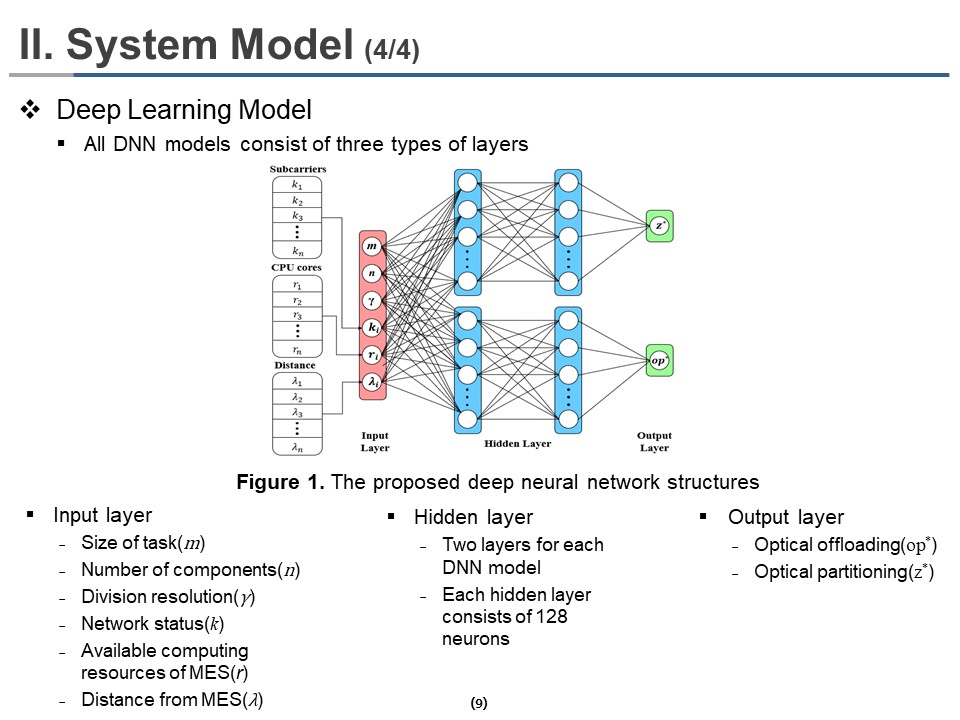

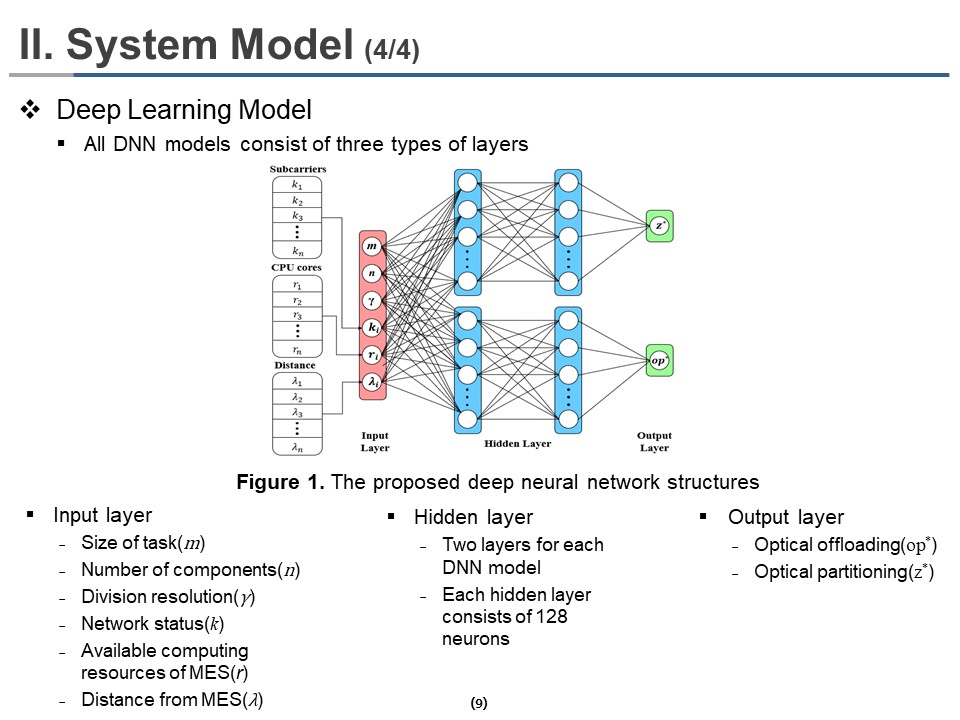

Every DNN model consists of three types of layers: an input layer, hidden layers, and output layers. The input layer encodes the task size (m), the number of components (n), the division resolution (γ), the network status (k), the available computing resources of the MES (r), and the distance from the MES (λ). Each model has two hidden layers of 128 neurons each. There are two output layers, each providing different information: one for the optimal offloading policy (op*) and the other for the optimal partitioning (z*). The overall DNN structures are shown in Figure 1.

The input layer therefore has 21 neurons: one for the task size, one for the number of components per task, one for the division resolution, six for the random distances of the UEs as they move during the execution of each component, six for the subcarriers used during the execution of each component, and six for the CPU cores assigned to each component (1 + 1 + 1 + 6 + 6 + 6 = 21). Similarly, each output layer has six neurons: six for the offloading policy in one output layer and six for the partitioning in the other. ReLU is the activation function for the hidden layers, and Softmax is used for the output layers.
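The layer sizes described above can be sketched as a small forward pass. This is only an illustrative sketch, not the authors' implementation: it assumes the two output layers share the same two hidden layers, that all inputs are already scaled to comparable ranges, and that weights are randomly initialized. The names `build_input`, `init_params`, and `forward` are hypothetical, and only the Python standard library is used.

```python
import math
import random

NUM_COMPONENTS = 6                    # components per task, as on the slide
INPUT_SIZE = 3 + 3 * NUM_COMPONENTS   # 1 + 1 + 1 + 6 + 6 + 6 = 21 neurons
HIDDEN_SIZE = 128                     # neurons per hidden layer


def build_input(task_size, n_components, resolution,
                distances, subcarriers, cpu_cores):
    """Concatenate the features into one 21-element input vector."""
    for group in (distances, subcarriers, cpu_cores):
        if len(group) != NUM_COMPONENTS:
            raise ValueError("each per-component group needs 6 values")
    return [task_size, n_components, resolution,
            *distances, *subcarriers, *cpu_cores]


def init_layer(n_in, n_out, rng):
    """Random weights (He-style scaling, an assumption) and zero biases."""
    scale = math.sqrt(2.0 / n_in)
    weights = [[rng.gauss(0.0, scale) for _ in range(n_in)]
               for _ in range(n_out)]
    return weights, [0.0] * n_out


def init_params(seed=0):
    """Two hidden layers plus two six-neuron output layers."""
    rng = random.Random(seed)
    return {
        "hidden1": init_layer(INPUT_SIZE, HIDDEN_SIZE, rng),
        "hidden2": init_layer(HIDDEN_SIZE, HIDDEN_SIZE, rng),
        "offloading": init_layer(HIDDEN_SIZE, NUM_COMPONENTS, rng),
        "partitioning": init_layer(HIDDEN_SIZE, NUM_COMPONENTS, rng),
    }


def dense(x, layer):
    """Affine transform: one weighted sum plus bias per output neuron."""
    weights, biases = layer
    return [sum(w * v for w, v in zip(row, x)) + b
            for row, b in zip(weights, biases)]


def relu(x):
    return [max(0.0, v) for v in x]


def softmax(x):
    """Numerically stable softmax: subtract the maximum before exp."""
    peak = max(x)
    exps = [math.exp(v - peak) for v in x]
    total = sum(exps)
    return [e / total for e in exps]


def forward(params, x):
    """Return (offloading policy, partitioning) for one input vector."""
    h = relu(dense(x, params["hidden1"]))
    h = relu(dense(h, params["hidden2"]))
    op = softmax(dense(h, params["offloading"]))
    z = softmax(dense(h, params["partitioning"]))
    return op, z
```

Calling `forward(init_params(), build_input(...))` returns two lists of six values each, and each list sums to 1 because of the Softmax output. If the models on the slide are in fact separate networks rather than a shared trunk with two heads, the same helpers can be instantiated once per output.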

|

IEEE/ICACT20220171 Slide.09
