|

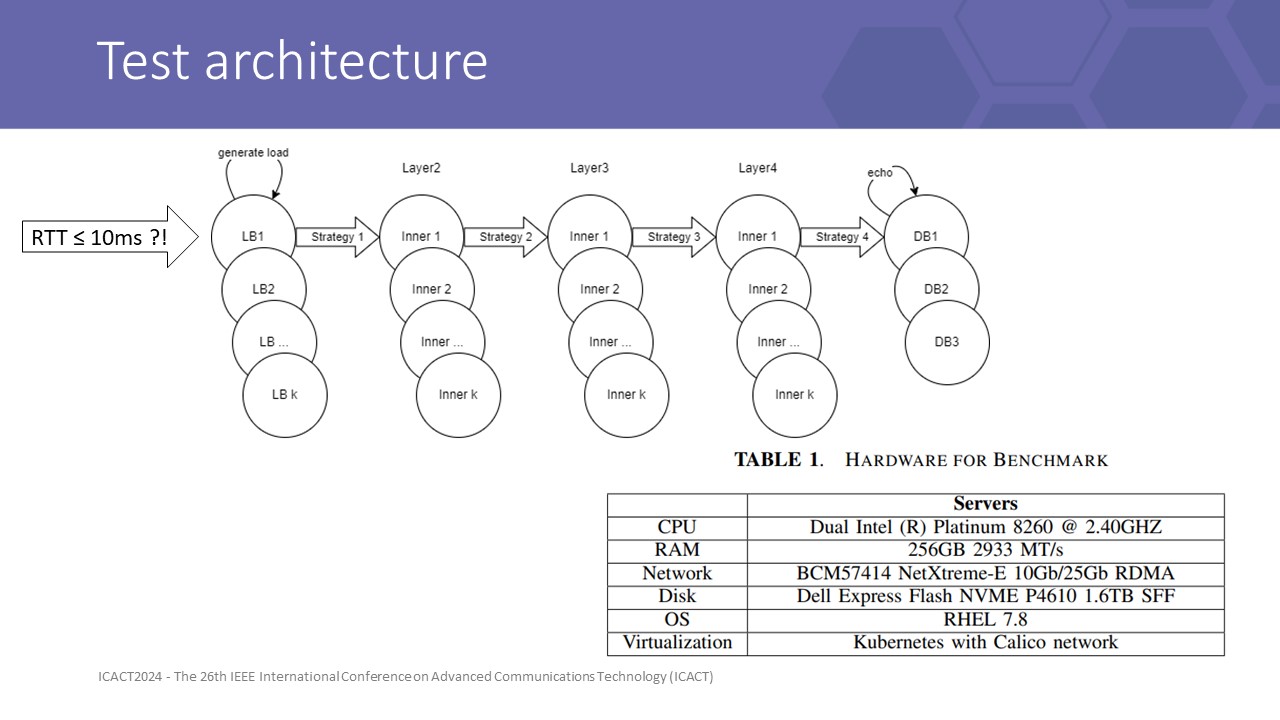

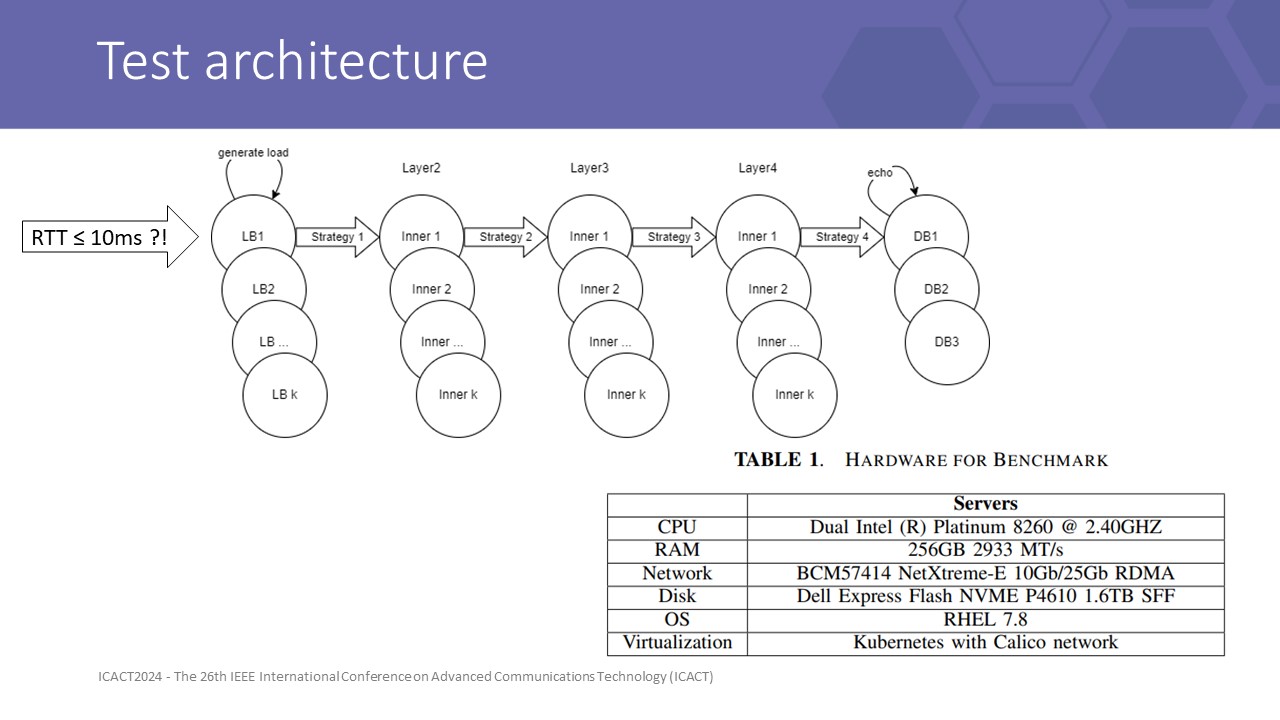

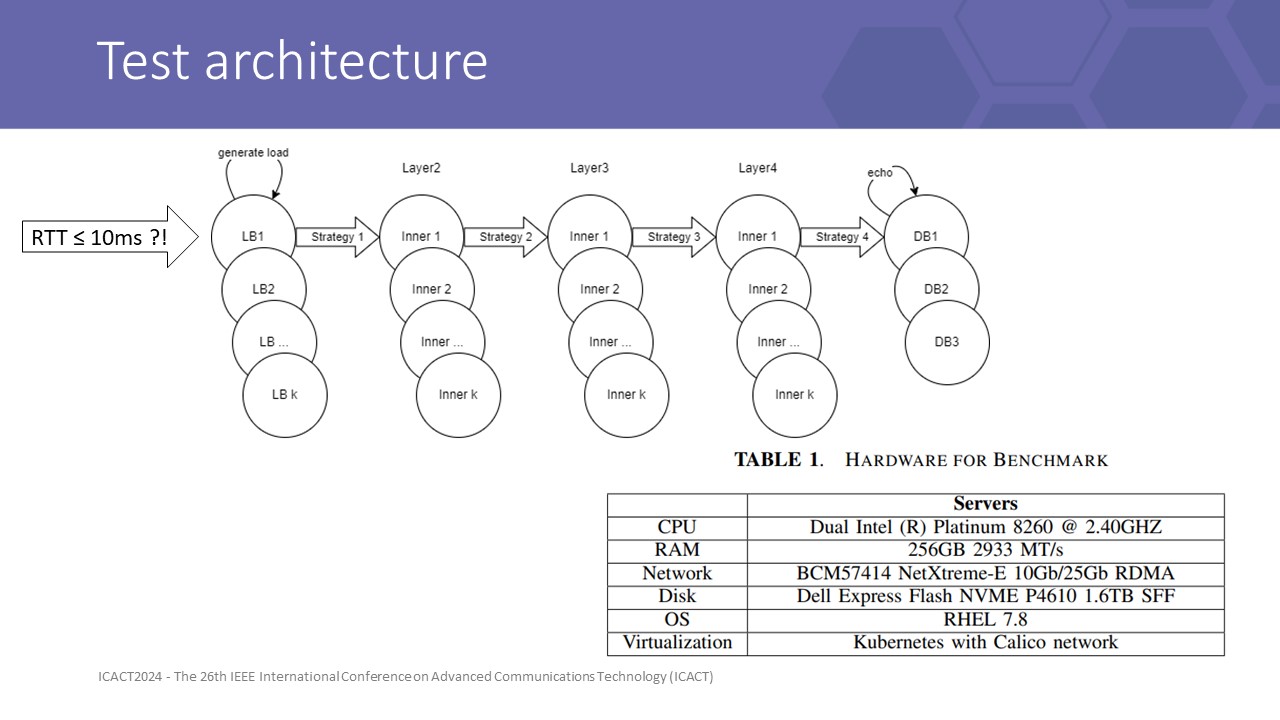

Our testbed is designed as shown on the screen.

The database is simulated by an echo server.

Each inner application is a homogeneous single-flow instance layer. The API gateway (LB) is a modified application with generator threads that produce 4 KB messages with random fields, in ByteBuf format, and push them into the LB incoming queue.

Each service layer is deployed on its own non-overlapping physical node, so that every request must travel over the physical network rather than over localhost. URLLC defines a one-way data-plane latency of less than 1 ms. Across 5 layers there are 4 inter-layer connections, plus the connection to an external (source) system, each used in both directions. Therefore, 10 ms is the upper bound of the transmit latency.
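The 10 ms bound can be checked by counting one-way hops. This is a minimal sketch of that arithmetic. It assumes that the 4 inter-layer links and the single link to the external source system are each traversed once in both directions, and that each one-way hop is budgeted at the 1 ms URLLC limit. The variable names are illustrative and not taken from the testbed.

```python
# Assumed reading of the slide: 5 layers give 4 inter-layer links,
# plus 1 link to the external (source) system; each link is used both ways.
LAYERS = 5
ONE_WAY_BUDGET_MS = 1.0  # URLLC one-way data-plane latency bound

inner_links = LAYERS - 1
external_links = 1
one_way_hops = (inner_links + external_links) * 2  # two directions per link
upper_bound_ms = one_way_hops * ONE_WAY_BUDGET_MS

print(f"{one_way_hops} one-way hops, upper bound {upper_bound_ms} ms")
```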

We record logs at multiple layers and draw conclusions from the results at the LB, which give a view of the front-line queue status and of the send cycle time statistics (measured at the client component of the LB). Together these represent the latency a physical LB must wait: RTT = InSQ:avgTime + SendCy:avgTime.
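To make the RTT definition concrete, here is a small sketch that computes it for one statistics window. The function name, the millisecond unit, and the sample values are assumptions for illustration only; they are not measurements from the testbed.

```python
from statistics import mean

def rtt_for_window(in_queue_wait_ms, send_cycle_ms):
    """RTT = InSQ:avgTime + SendCy:avgTime for a single window.

    in_queue_wait_ms: time each message spent in the LB incoming queue
    send_cycle_ms:    send cycle time of each message on the LB client side
    """
    return mean(in_queue_wait_ms) + mean(send_cycle_ms)

# Hypothetical values, chosen only to show the calculation.
sample_in_queue = [0.2, 0.4, 0.3]
sample_send_cycle = [1.0, 1.2, 1.1]
rtt = rtt_for_window(sample_in_queue, sample_send_cycle)
print(f"RTT for this window: {rtt:.2f} ms")
```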

We divided our scenarios into 2 dimensions:

• Sizing dimension: 2x, 3x, 4x, 6x, 8x, where 1x = 1 vCore CPU + 1.5 GB physical memory. At each scale, application configurations such as handleThread, Xmx, CPU factor, ... are scaled proportionally.

• Load dimension: underload/highload.
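The two dimensions above form a small scenario grid. The sketch below enumerates it, scaling only the resources that the slide gives a 1x baseline for (vCores and memory). The application settings (handleThread, Xmx, CPU factor) are left out because their base values are not stated. The class and function names are hypothetical.

```python
from dataclasses import dataclass

BASE_VCORES = 1        # 1x = 1 vCore CPU
BASE_MEMORY_GB = 1.5   # 1x = 1.5 GB physical memory
SCALES = (2, 3, 4, 6, 8)
LOADS = ("underload", "highload")

@dataclass(frozen=True)
class Sizing:
    scale: int
    vcores: int
    memory_gb: float

def sizing_for(scale):
    """Scale the 1x baseline proportionally."""
    return Sizing(scale, BASE_VCORES * scale, BASE_MEMORY_GB * scale)

def scenarios():
    """Every (sizing, load) combination across both dimensions."""
    return [(sizing_for(s), load) for s in SCALES for load in LOADS]

for sizing, load in scenarios():
    print(f"{sizing.scale}x: {sizing.vcores} vCore, {sizing.memory_gb} GB, {load}")
```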

To achieve a 99% confidence level at a 5% margin of error for an unlimited population, we collect 666 data samples in 1 s windows after skipping the first 100, with timestamps matched across data points from the layer instances.
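The figure of 666 samples is consistent with the standard sample-size formula for an unlimited population, n = z² · p(1 − p) / e². The sketch below assumes the rounded critical value z = 2.58 for 99% confidence and the most conservative proportion p = 0.5. Neither value is stated on the slide, and a more precise z would give a slightly smaller n.

```python
import math

def sample_size(z, margin, p=0.5):
    """Sample size for an unlimited population: n = z^2 * p * (1 - p) / e^2."""
    return math.ceil(z * z * p * (1 - p) / (margin * margin))

# Assumed inputs: z = 2.58 (99% confidence, rounded), 5% margin of error.
n = sample_size(z=2.58, margin=0.05)
print(n)
```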

Log4j logging is enabled for statistics and errors. Statistics are accounted per interval (1 s) in windows.

|

IEEE/ICACT20240417 Slide.21

Oral Presentation
